It's everywhere... AI is taking over HR and employment practices, and there is cause for concern for business owners and employees alike. Society in general is at risk, and many people a lot smarter than me are already voicing the very real concerns we now face. AI-generated imagery and reports on social media are making it harder to separate truth from fiction, and fact-checking seems to be a thing of the past. The misinformation and manipulation that AI can support are really quite scary. The ability to generate realistic deepfakes makes it so much easier for people to spread disinformation and erode trust.

But what about AI in your business?

What you should start thinking about:

Firstly, across the board, many employees have genuine concerns that their employment may be in jeopardy, especially people who perform repetitive or creative tasks where AI has already proven useful, such as in graphic design. Many articles today suggest that as many as 25% of jobs could be replaced by AI.

Now is the time to start working with your team to discuss how AI might be used productively and appropriately in your business. There may well be areas where the use of appropriate AI tools can free your valued team members from routine work, allowing them to focus on tasks and projects that require skills such as critical thinking or an innovative approach.

Include your team in decisions about where and how AI can be deployed in your business. Working closely with your people to realign them with new tasks and responsibilities will help reassure them of their value and ongoing employment. The last thing your business needs in these uncertain times is team members who feel anxious and worried about their future. Your business will continue to flourish when people remain engaged, motivated, included and involved in the way it evolves.

Secondly, employers also have concerns, especially about recruitment. It has become all too common to see cover letters and CVs wholly generated by AI, which makes it harder to shortlist candidates and identify the most suitable people to interview. This leads to wasted time and genuine concern about whether candidates really have the qualifications, knowledge and skills to perform the role. It has become even more critical for businesses to include capability assessments in their recruitment process to gain a true understanding of a person’s abilities.

Beyond these concerns, many businesses are considering how they can use AI to improve efficiency, lift professionalism and reduce costs. While it always makes good business sense to review the tools available to help with these goals, it’s important to think carefully about where not to use AI…

Where Not to Use AI: People-Related Matters

AI in Recruitment: Why It Gets It Wrong

Sure, there are software tools that can help businesses and managers shortlist candidates for jobs, but we are increasingly learning about the dangers of these tools and the newer AI models.

These tools often learn biases. The best-known example is Amazon’s ‘sexist AI’ recruiting tool (2014–2018). The algorithm was trained on 10 years of CVs submitted to the company, most of them from men, reflecting the male-dominated nature of the tech industry at the time. The software taught itself that male candidates were preferable, so it downgraded resumes containing the word ‘women’s’ and penalised graduates of all-women’s colleges. When the problem came to light, Amazon edited the program to make it neutral to those specific terms, but it could not guarantee the system wouldn’t find other ways to discriminate, so it eventually disbanded the project.

The Amazon case is not the only one, however. Other notable examples include the ‘Workday Screening Lawsuit’, a class action alleging that Workday’s AI screening tools discriminated against applicants based on race, age and disability, and the LinkedIn job-matching case, in which LinkedIn discovered that its recruiting algorithm was disadvantaging female candidates by favouring male candidates based on historical click patterns and job-seeking behaviours.

The takeaway: avoid AI involvement in your recruitment practices. Not only will you avoid the risk of algorithmic bias, but you also won’t miss out on top talent or on candidates who have been creative and unique in their applications!

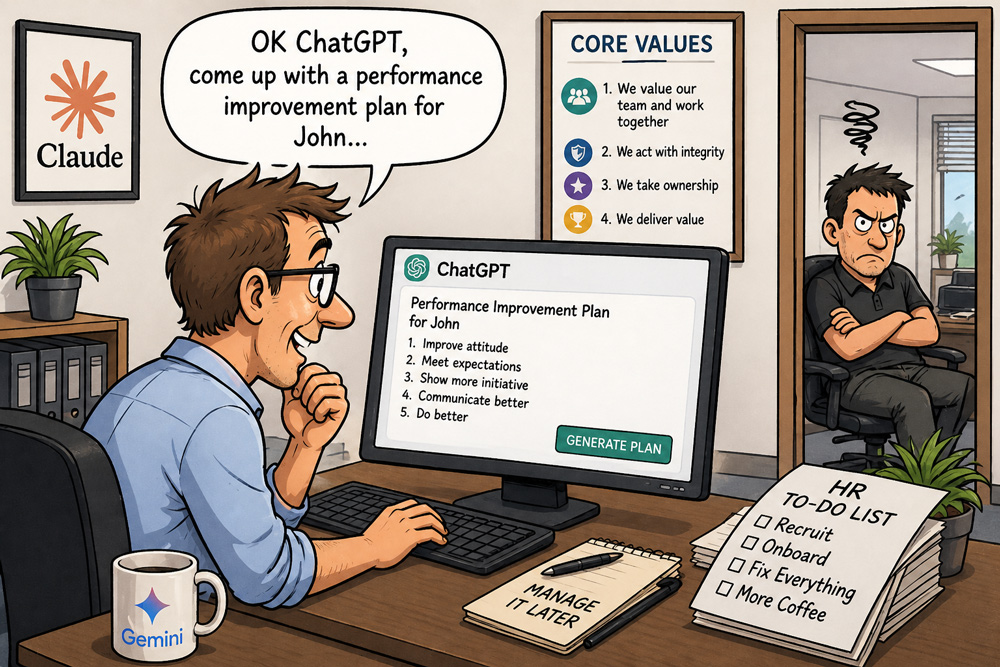

AI for Performance Management: Why People Need People

When you are managing people, there are always going to be performance and behaviour concerns. Here’s the thing: people deserve to be managed by other people, not robots. So, if you're creating a performance improvement plan, or a letter about a redundancy process or performance matter, please write it yourself (or get the HRSS team to do it for you!). AI does not know your people, cannot tailor the communication to the specific individual or circumstances, and cannot provide support, empathy or coaching. Sure, you might use a tool such as Copilot to help you articulate something more clearly or to make your language more professional, but please don’t use AI to ‘manage your people’.

If your managers aren't capable of managing their teams, coach them or find better people leaders. Don’t rely on AI for this type of work.

Algorithmic management often treats employees as data points, failing to account for human nuance or context. Here are some specific cases where the use of AI has created issues:

- A company used AI to set performance metrics, which were impossible to achieve. This led to the termination of some top performers because they couldn't meet the targets.

- Amazon warehouse workers are monitored by AI systems that track 'time off task' via scanners and cameras. This AI tool can automatically generate warnings or fire employees without human intervention, leading to high stress and dangerous working conditions. (I'm not sure whether this tool is used in Australia. If it is, I can only imagine the Fair Work matters Amazon Australia will face in time.)

- Last year the Fair Work Commission found that a worker had been unfairly dismissed following an AI-generated restructure decision made without the mandatory consultation process.

The takeaway: AI is not for performance management, addressing behavioural concerns or issues involving your people. The use of AI in these situations will result in the loss of good people, employee disengagement and total erosion of your workplace culture. People need to manage people.

AI and Fair Work Matters: What the Commission Is Saying

Just last week one of our team handled a Fair Work matter for a client. The Commissioner made a specific comment at the end of the hearing, thanking both the applicant and our client for not using AI to create their application or response. He explained that the number of unfair dismissal applications and general protections applications had risen drastically. People are using AI to ‘find out their rights’ and then write their applications, and employers have relied on AI to formulate their responses.

By the end of the 2025/26 financial year, the Commission expects its total workload to have grown by more than 70% in just three years. Across that period, it has also seen widespread use of AI-generated language in applications, particularly unfair dismissal and general protections matters.

The Fair Work Commission has found:

- AI tools can produce inaccurate or outdated information, leading to a surge in poor-quality, ‘unrealistically optimistic’ claims that waste the Commission’s time.

- AI use in submissions has led to fabricated or ‘hallucinated’ legal cases and unmeritorious claims or responses.

- While many parties use AI to draft submissions, the Commission warns that relying on public generative AI tools can lead to confidentiality breaches and incorrect advice.

The Commission has now introduced rules requiring parties to disclose if generative AI was used to prepare documents.

(Oh, and yes, Sophie had a positive outcome for her client in the matter last week! 😊)

In short, AI has a place in business. While I am scared about the impact on society in general, there’s no point in sticking our heads in the sand. Competitors will be using AI to improve productivity and profit, and to stay on a level playing field, most of us will need (or want) to look at how AI can be used well in our businesses. However, using AI for anything people-related is a guaranteed way to harm your business, so steer clear when it’s about people, and use your people to help determine how AI is adopted in your business.

No AI was used in the writing of this article!

If you need some support in navigating the use of AI appropriately in your business, or with anything people-related, please reach out. We’re always here to help!

When it comes to recruitment, it's better not to use AI. AI screening tools have a track record of bias, and many businesses have been caught out. You also risk missing great candidates: the ones who don't tick the algorithm's boxes. Get a human to do it.

Please don't use AI to manage your people. They deserve to be managed by humans who actually know them. You can use AI to tidy up your grammar or spelling if you like, but the message should come from you.

The Fair Work Commission is not a fan of AI-generated applications, which are usually too long. It has seen made-up legal cases, dodgy claims, and confidentiality breaches from public AI tools, and it now requires parties to disclose if generative AI was used to prepare documents.

So where can you use AI? Anywhere that doesn't involve assessing, managing or recruiting your people. Think admin tasks, content creation or streamlining your processes. Talk to your team (or ours) about where it might help. Just keep it well away from HR decisions.